What if AI didn’t just assist — but collaborated the way the best human teams do? That future is not a prediction. It’s already shipping in production.

Imagine assembling a crack team for a complex project. You’d want a strategist to plan the work, a researcher to gather intelligence, a specialist to execute the technical pieces, and a reviewer to catch what everyone else missed. The magic isn’t in any one person; it’s in how they communicate, divide labor, and improve on each other’s work. Now imagine that team operating at software speed, without lunch breaks, scaling instantly from one project to a thousand. That’s the promise of multi-agent AI systems, and in 2026, it’s becoming reality.

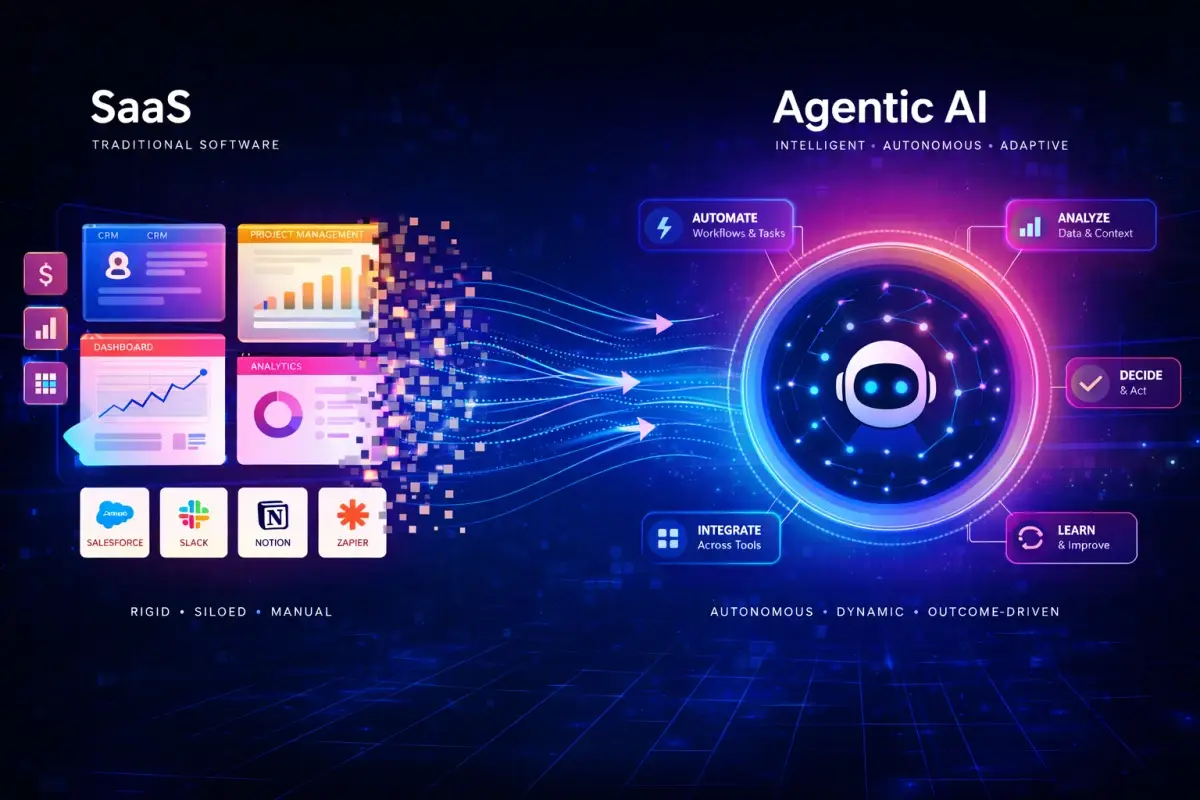

For years, AI progress was measured by what a single model could do: how well it could answer a question, write a paragraph, or generate an image. Those benchmarks still matter, but they’ve begun to feel limiting. The most consequential frontier now lies not in what one AI can do alone, but in what several AI agents can accomplish together — specializing, communicating, checking each other’s work, and pursuing goals that no single system could reach on its own.

This shift is being driven by research and engineering advances at OpenAI, Google DeepMind, Anthropic, and a fast-growing ecosystem of startups building the orchestration infrastructure that makes agent collaboration possible. What emerges from that work will change not just how we build software, but how knowledge work itself gets done. This article explains what multi-agent systems are, how they work, where they’re already being deployed, and what they mean for businesses and individuals who want to stay ahead of the curve.

What Are Multi-Agent Systems?

A multi-agent system (MAS) is an architecture in which multiple distinct AI agents, each with defined roles, capabilities, and responsibilities, work together toward shared or complementary goals. Rather than routing every task through a single general-purpose model, a multi-agent system decomposes work across a network of specialized agents that communicate, coordinate, and collaborate to produce results no single agent could achieve alone.

The key word is “together.” These are not simply multiple AI tools running in parallel without awareness of each other. Agents in a well-designed system exchange information, build on each other’s outputs, flag errors for peer review, and adapt their behavior based on what other agents are doing. The system is genuinely collaborative — not just concurrent.

How They Differ From Single AI Agents

A single AI agent, however capable, faces inherent limitations. Its attention is finite. As a generalist, it can only specialize so far. It cannot simultaneously focus on high-level strategy while executing precise technical subtasks. And when it makes a mistake, there’s no peer review mechanism to catch it before the error propagates downstream.

Multi-agent systems address each of these constraints directly. Specialization becomes possible because different agents can be optimized — through fine-tuning, system prompts, or tool access — for different kinds of work. Error correction improves because reviewer agents can audit the outputs of executor agents independently. And throughput scales because agents can work on different subtasks simultaneously rather than sequentially.

The Company Team Analogy

How Multi-Agent Systems Work

Understanding the mechanics of agent collaboration is essential to understanding what makes these systems powerful — and where they can fail. Four core mechanisms drive the operation of most production multi-agent architectures.

Role Assignment and Specialization

Each agent in the system is given a defined function — through system prompts, fine-tuned weights, or tool restrictions. The orchestrator knows which agent handles which class of task and routes work accordingly. This division of labor is what enables specialization at speed: a coding agent optimized for Python doesn’t waste cycles trying to write marketing copy.
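The routing idea can be sketched in a few lines of Python. This is a minimal illustration, not any particular framework's API: the `Agent` and `Orchestrator` classes, the task-type strings, and the lambda handlers (which stand in for real model calls) are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Agent:
    """A hypothetical agent: a role name plus a handler standing in for a model call."""
    role: str
    handle: Callable[[str], str]


class Orchestrator:
    """Routes each task to the agent registered for its task type."""

    def __init__(self) -> None:
        self._routes: Dict[str, Agent] = {}

    def register(self, task_type: str, agent: Agent) -> None:
        self._routes[task_type] = agent

    def dispatch(self, task_type: str, payload: str) -> str:
        agent = self._routes.get(task_type)
        if agent is None:
            # Fail loudly rather than let a generalist guess at the task.
            raise ValueError(f"no agent registered for task type {task_type!r}")
        return agent.handle(payload)


orchestrator = Orchestrator()
orchestrator.register("code", Agent("coder", lambda p: f"[coder] implementation for: {p}"))
orchestrator.register("copy", Agent("copywriter", lambda p: f"[copywriter] draft for: {p}"))

result = orchestrator.dispatch("code", "parse a CSV file")
print(result)
```

In a real system the routing key would more likely come from a classifier or a planner agent than from a hard-coded string, but the lookup-then-delegate shape stays the same.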

Communication and Coordination

Agents exchange structured messages — task descriptions, outputs, feedback, and status updates — through shared communication channels managed by the orchestration layer. This communication can be synchronous (agent A waits for agent B’s output before proceeding) or asynchronous (agents work in parallel and reconcile results at defined checkpoints).
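To make the two coordination modes concrete, here is a small sketch using Python's standard `asyncio` library. The `agent` coroutine is a placeholder for a real model call, and the agent names and tasks are invented for illustration.

```python
import asyncio


async def agent(name: str, task: str, delay: float = 0.01) -> str:
    """Stand-in for an agent call; the sleep simulates model latency."""
    await asyncio.sleep(delay)
    return f"{name}: {task}"


async def synchronous_handoff() -> list[str]:
    # The writer needs the researcher's output, so the calls run one after the other.
    a = await agent("researcher", "collect sources")
    b = await agent("writer", f"summarize <{a}>")
    return [a, b]


async def parallel_with_checkpoint() -> list[str]:
    # Independent subtasks run concurrently; gather() acts as the checkpoint
    # where results are collected, in the order the calls were submitted.
    return await asyncio.gather(
        agent("tester", "write unit tests"),
        agent("security", "scan dependencies"),
    )


sequential = asyncio.run(synchronous_handoff())
parallel = asyncio.run(parallel_with_checkpoint())
print(sequential)
print(parallel)
```

The trade-off is the usual one: the synchronous handoff is easier to reason about, while the parallel version finishes sooner but needs an explicit point where results are reconciled.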

Shared Memory and Context

For agents to collaborate meaningfully, they need access to shared state — a record of what has been done, what has been decided, and what is still pending. Modern multi-agent frameworks implement persistent memory systems (databases, vector stores, session contexts) that all agents can read from and write to, maintaining coherence across the lifecycle of a complex task.
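A shared-state store can be as simple as a lock-protected dictionary with a write log. The sketch below is an in-memory stand-in; the `SharedMemory` class and its method names are hypothetical, and a production system would back this with a database or vector store as described above.

```python
import threading
from typing import Any


class SharedMemory:
    """In-memory stand-in for a shared store that every agent can read and write."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._state: dict[str, Any] = {}
        self._log: list[tuple[str, str]] = []  # (agent, key) write history

    def write(self, agent: str, key: str, value: Any) -> None:
        with self._lock:
            self._state[key] = value
            self._log.append((agent, key))

    def read(self, key: str, default: Any = None) -> Any:
        with self._lock:
            return self._state.get(key, default)

    def history(self) -> list[tuple[str, str]]:
        with self._lock:
            return list(self._log)


memory = SharedMemory()
memory.write("planner", "pending", ["draft spec", "write tests"])

# The coder picks up the first pending item and records that it is done.
pending = memory.read("pending")
memory.write("coder", "pending", pending[1:])
memory.write("coder", "done", ["draft spec"])

print(memory.read("pending"), memory.read("done"))
```

Keeping a write history alongside the state is a deliberate choice: it records who changed what, which is what you need when two agents disagree about what has already been decided.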

Feedback Loops and Iteration

The best multi-agent systems don’t just execute — they evaluate. Reviewer agents assess the outputs of executor agents against defined quality criteria, returning feedback for revision. This creates iterative improvement cycles that progressively refine outputs toward higher quality, mimicking the peer review processes that make human teams more reliable than individuals.
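The executor-reviewer cycle can be sketched as a bounded loop. Everything here is illustrative: `refine`, `toy_executor`, and `toy_reviewer` are made-up names, and the "quality criterion" (the draft must contain a docstring) is a toy rule standing in for a real rubric or a model-based review.

```python
from typing import Callable, Optional, Tuple


def refine(
    task: str,
    execute: Callable[[str, Optional[str]], str],
    review: Callable[[str], Tuple[bool, str]],
    max_rounds: int = 3,
) -> Tuple[str, int]:
    """Alternate executor and reviewer until approval or max_rounds is reached."""
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = execute(task, feedback)
        approved, feedback = review(draft)
        if approved:
            return draft, round_no
    return draft, max_rounds  # best effort; the caller may escalate to a human


def toy_executor(task: str, feedback: Optional[str]) -> str:
    draft = f"def solve(): ...  # {task}"
    if feedback and "docstring" in feedback:
        draft = '"""Solve the task."""\n' + draft
    return draft


def toy_reviewer(draft: str) -> Tuple[bool, str]:
    if '"""' not in draft:
        return False, "add a docstring"
    return True, "looks good"


final_draft, rounds = refine("sum a list", toy_executor, toy_reviewer)
print(rounds)
print(final_draft)
```

The `max_rounds` cap matters in practice: without it, an executor and reviewer that never converge will loop (and bill) indefinitely.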

Key Technologies Behind Multi-Agent Collaboration

Multi-agent systems don’t emerge from a single technological breakthrough. They are the product of several converging capabilities that have each reached sufficient maturity in the last two years to make real-world deployment tractable.

Real-World Use Cases of Multi-Agent Systems

The most clarifying way to understand multi-agent systems is to look at where they’re actually being deployed — not in research labs, but in production environments where the goal is real business value.

Autonomous Business Operations

Multi-agent systems are handling end-to-end business workflows: a planner agent breaks down incoming customer requests, routing agents assign them to specialist handlers, resolution agents draft responses, and quality agents review outputs before delivery. The entire customer service pipeline — from intake to resolution — can operate with minimal human touch on routine cases.

Software Development Teams

Agent-based dev teams are becoming real: a product agent translates requirements into technical specifications, a coding agent writes the implementation, a test agent writes and runs unit tests, a security agent scans for vulnerabilities, and a documentation agent drafts the README. The code review cycle that once required multiple engineers can be executed in minutes by an agent ensemble.

Research and Data Analysis

Complex research tasks — literature review, competitive intelligence, market analysis, scientific synthesis — are well-suited to multi-agent approaches. A search agent gathers sources, a reading agent extracts key findings, a synthesis agent identifies patterns and conflicts, and a writing agent produces structured reports. The entire process that would take a human team days can be compressed to hours.
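The search, read, synthesize, write sequence is a plain pipeline, which can be sketched as function composition. The stage functions below return canned strings and are purely hypothetical; real stages would call a search API or a model.

```python
from functools import reduce
from typing import Any, Callable

Stage = Callable[[Any], Any]


def pipeline(*stages: Stage) -> Stage:
    """Compose stages left to right: each stage consumes the previous output."""
    return lambda data: reduce(lambda acc, stage: stage(acc), stages, data)


def search(topic: str) -> list[str]:
    return [f"{topic} survey", f"{topic} benchmark"]


def read(sources: list[str]) -> list[str]:
    return [f"finding from {s}" for s in sources]


def synthesize(findings: list[str]) -> dict:
    return {"count": len(findings), "findings": findings}


def write(summary: dict) -> str:
    lines = [f"Report ({summary['count']} findings)"]
    lines += [f"- {f}" for f in summary["findings"]]
    return "\n".join(lines)


research = pipeline(search, read, synthesize, write)
report = research("agent orchestration")
print(report)
```

Because each stage only depends on the previous stage's output, any one of them can be swapped for a better agent without touching the rest.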

Gaming and Simulation Environments

Multi-agent systems have long been used in game AI and simulation, but the applications have deepened dramatically. AI agents interacting in simulated environments generate training data for reinforcement learning, model complex social and economic systems, and test the behavior of autonomous systems in controlled settings before real-world deployment. DeepMind has used multi-agent simulation extensively in its game-playing and safety research.

Multi-Agent Systems vs Human Teams

The analogy between AI agent teams and human teams is useful precisely because it has limits. Understanding where the comparison holds — and where it breaks down — clarifies both the power and the appropriate scope of multi-agent AI deployment.

| DIMENSION | MULTI-AGENT AI | HUMAN TEAMS |
|---|---|---|
| Specialization | Highly configurable; agents can be precisely tuned to specific task types with consistent behavior across every instance | Deep specialization takes years to develop; varies significantly between individuals |
| Communication | Structured, lossless, instantaneous; agents exchange exactly what they’re designed to exchange | Rich but lossy; tone, context, and politics all affect what actually gets communicated |
| Scale and Speed | Essentially unlimited; a multi-agent system can spin up hundreds of instances in seconds | Fundamentally constrained by headcount, time zones, and human working hours |
| Creativity | Pattern-based; excellent at recombining existing knowledge, weaker at genuinely novel conceptual leaps | Humans remain the source of the most original thinking, especially in novel problem spaces |
| Ethical Judgment | Rules-based and context-constrained; struggles with genuinely ambiguous situations requiring moral reasoning | Humans bring lived experience, empathy, and values-based judgment to complex decisions |
| Strategic Vision | Excellent at executing toward a defined strategy; weaker at forming novel strategic insights about competitive dynamics and organizational identity | Long-term strategic thinking, particularly involving organizational culture and human relationships, remains a distinctly human domain |

The most productive framing is not “AI teams versus human teams” but “what kind of team gets the best results when AI agents and humans work together?” The answer varies by task — and the skill of knowing which tasks to delegate to agents is itself becoming a crucial human competency.

The Companies Driving This Shift

Multi-agent systems don’t emerge from a single lab or a single product decision. They are the cumulative output of a distributed ecosystem of research, engineering, and entrepreneurship — each contributor accelerating the others.

OpenAI: Ecosystem Leader

OpenAI’s contributions to multi-agent infrastructure have been extensive: the Assistants API introduced persistent conversation threads and built-in tool use at scale; the experimental Swarm framework demonstrated lightweight agent orchestration patterns; and GPT-4o’s function-calling capabilities made it practical to build agents that interact with external systems. OpenAI’s developer community, one of the largest in the AI space, has generated a dense ecosystem of third-party agent frameworks built on its models. The company’s approach favors rapid iteration and broad developer access over any single opinionated architecture.

DeepMind: Research Depth

Google DeepMind’s contributions are concentrated in the research foundations that make sophisticated agent behavior possible: multi-agent reinforcement learning, self-play training, emergent communication between agents, and simulation-based testing environments. Its work on agent behavior in game environments — from AlphaStar to complex multi-agent simulations — has produced insights about coordination and competition that are now being transferred to real-world agent systems. Gemini’s multimodal capabilities give DeepMind-powered agents a perceptual advantage over text-only systems.

Startups: The Builder Layer

The most consequential near-term acceleration is happening in the startup layer. Companies like CrewAI, LangChain, Zapier (with its AI automation layer), and dozens of vertical-specific agent builders are translating research capabilities into deployable products accessible to businesses without AI engineering teams. No-code and low-code agent platforms are expanding access to multi-agent architectures beyond the developer community, enabling operations managers, marketing teams, and small business owners to deploy agent workflows without writing a line of code.

The Future of AI Collaboration

The trajectory of multi-agent systems over the next several years follows a clear direction, even if the precise timeline and specific form are uncertain. Three broad phases are visible on the horizon.

How to Leverage Multi-Agent Systems Today

The strategic window for early adoption is open — but it won’t remain open indefinitely. Organizations and individuals who develop multi-agent fluency now will have compounding advantages over those who wait until the technology is mainstream.

Tools and Platforms

Best Practices for Implementation

Start Small and Specific

Resist the temptation to automate everything at once. Choose a single, well-defined workflow with clear inputs, outputs, and quality criteria. A multi-agent system for a narrow use case you understand deeply will teach you more — and produce more value — than an ambitious broad system that fails at every seam. Prove the pattern, then expand it.

Design for Observability First

Multi-agent systems fail in non-obvious ways. Before you can debug them, you need to see them. Instrument every agent interaction — log what each agent received, what it decided, and what it produced. Tools like LangSmith and Helicone provide agent-level tracing. Observability isn’t a feature to add later; it’s the prerequisite for operating these systems reliably.
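As a rough sketch of what "instrument every agent interaction" can look like without any external tooling, the decorator below records each call's input, output, error, and latency. The `traced` decorator and the `TRACE` list are assumptions for illustration and are not the LangSmith or Helicone APIs; capturing what an agent "decided" would additionally require the agent to return its intermediate reasoning or tool choices.

```python
import functools
import json
import time
from typing import Callable

TRACE: list[dict] = []  # in a real deployment this would go to a tracing backend


def traced(agent_name: str) -> Callable:
    """Record what an agent received, what it produced, and how long it took."""

    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        @functools.wraps(fn)
        def wrapper(message: str) -> str:
            start = time.perf_counter()
            record = {"agent": agent_name, "input": message}
            try:
                output = fn(message)
                record["output"] = output
                return output
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every call leaves a record.
                record["seconds"] = round(time.perf_counter() - start, 6)
                TRACE.append(record)

        return wrapper

    return decorator


@traced("summarizer")
def summarize(text: str) -> str:
    return text[:20]  # placeholder for a model call


summarize("Multi-agent systems fail in non-obvious ways.")
print(json.dumps(TRACE[-1]))
```

Emitting one structured record per call, including failed calls, is what makes it possible to replay a bad run agent by agent.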

Build in Human Checkpoints

Fully autonomous execution is appropriate for low-stakes, high-volume, reversible tasks. For anything consequential — customer-facing communications, code deployed to production, financial transactions — keep human review at defined checkpoints. Design your agent system with explicit handoff points where a human can inspect, approve, or redirect before the next phase executes.
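One way to express an explicit handoff point is a gate that only asks for approval when a step is marked high-risk. The sketch below is hypothetical throughout: the `Risk` levels, `run_step`, and `reviewer_policy` are invented names, and the fixed policy stands in for a real human reviewer so the example stays deterministic.

```python
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = "low"    # reversible, high-volume
    HIGH = "high"  # customer-facing, production, financial


def run_step(
    action: Callable[[], str],
    risk: Risk,
    approve: Callable[[str], bool],
    description: str,
) -> str:
    """Execute low-risk steps directly; ask for approval before high-risk ones."""
    if risk is Risk.HIGH and not approve(description):
        return f"halted: {description}"
    return action()


# In practice `approve` would open a ticket or prompt a reviewer and wait.
def reviewer_policy(description: str) -> bool:
    return "refund" not in description


tagged = run_step(lambda: "ticket tagged", Risk.LOW, reviewer_policy, "tag ticket")
refund = run_step(lambda: "refund issued", Risk.HIGH, reviewer_policy, "issue refund of $500")
print(tagged)
print(refund)
```

Note that the action is passed as a callable and only invoked after approval, so a rejected step has no side effects to undo.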

Iterate on Role Design

The most common failure mode in early multi-agent systems is poor role definition — agents with overlapping responsibilities, unclear handoffs, or scope so broad that specialization provides no benefit. Treat your agent role design as a product design problem: user-test it, identify where the seams break, and refine the boundaries until collaboration genuinely improves on what a single agent could do alone.

Measure Rigorously

Multi-agent systems are expensive to run. Without measurement, it’s easy to spend heavily on infrastructure that produces marginal value over simpler approaches. Define your quality metrics and cost-per-task targets before deployment, measure continuously, and be willing to simplify: a two-agent system that achieves 90% of the quality at 30% of the cost of a six-agent system is usually the right answer.
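The comparison in that last sentence is easy to turn into a repeatable check. The sketch below uses invented numbers chosen only to mirror the 90%-of-quality, 30%-of-cost example; `RunStats` and `pick` are hypothetical names, and `mean_quality` assumes you already have a scoring rubric that yields a value between 0 and 1.

```python
from dataclasses import dataclass


@dataclass
class RunStats:
    name: str
    tasks: int
    total_cost_usd: float
    mean_quality: float  # 0.0 to 1.0, from whatever rubric you define

    @property
    def cost_per_task(self) -> float:
        return self.total_cost_usd / self.tasks


def pick(candidates: list[RunStats], min_quality: float) -> RunStats:
    """Return the cheapest configuration that still meets the quality floor."""
    eligible = [c for c in candidates if c.mean_quality >= min_quality]
    if not eligible:
        raise ValueError("no configuration meets the quality floor")
    return min(eligible, key=lambda c: c.cost_per_task)


# Illustrative figures only: the two-agent run costs 30% as much
# and scores 90% of the six-agent run's quality.
six = RunStats("six-agent", tasks=1000, total_cost_usd=500.0, mean_quality=0.90)
two = RunStats("two-agent", tasks=1000, total_cost_usd=150.0, mean_quality=0.81)

choice = pick([six, two], min_quality=0.80)
print(choice.name, round(choice.cost_per_task, 2))
```

Setting the quality floor before looking at the results keeps the decision honest: if the floor were 0.85, the same function would select the six-agent system instead.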

Conclusions

Multi-agent systems represent something genuinely new in the history of software: the first time we’ve been able to build computational systems that collaborate on complex cognitive work the way human teams do, with specialization, communication, peer review, and iterative improvement. The architecture is not a gimmick. It addresses fundamental limitations of single-agent AI in a way that model scaling alone cannot.

The companies leading this shift — OpenAI with its ecosystem breadth, DeepMind with its research foundations, Anthropic with its safety-focused infrastructure, and a fast-growing startup layer building the tools that democratize access — are collectively creating the conditions for a new kind of work. Not AI as a tool you pick up and put down, but AI as a collaborating member of a team that operates continuously, learns incrementally, and scales on demand.

The question for businesses and individuals isn’t whether to engage with multi-agent systems. It’s how quickly to develop the understanding and the workflows to use them well. The teams — human or hybrid — that figure that out first will have advantages that compound over time.

The era of the solo AI assistant is giving way to the era of the AI team. Learning to lead, design, and collaborate with that team is the defining professional skill of the next decade.